mirror of

https://github.com/PaddlePaddle/FastDeploy.git

synced 2026-04-22 16:07:51 +08:00

[Model] Add YOLOV8 For RKNPU2 (#1153)

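* Update ppdet
* Update ppdet
* Update ppdet
* Update ppdet
* Update ppdet
* Add ppdet_decode
* Update multi-batch support
* Update multi-batch support
* Update multi-batch support
* Update comments
* Try to fix the pybind issue
* Try to fix the pybind issue
* Try to fix the pybind issue
* Refactor code
* Refactor code
* Refactor code
* Revise as requested
* Update Picodet docs
* Update Picodet docs and yolov8 docs
* Revise the picodet and yolov8 examples
* Update the Picodet model conversion script
* Update example code
* Update yolov8 quantization code
* Fix some bugs and add pybind
* Fix pybind
* Fix the pybind error
* Update documentation
* Update documentation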


@@ -1,121 +1,31 @@

[English](README.md) | 简体中文

# PaddleDetection RKNPU2 Deployment Example

## Supported Models

FastDeploy currently supports deploying the following models:

- [PicoDet series models](https://github.com/PaddlePaddle/PaddleDetection/tree/release/2.4/configs/picodet)

FastDeploy currently supports deploying the following PaddleDetection models on RKNPU2:

- Picodet
- PPYOLOE
- YOLOV8

## Prepare and Convert PaddleDetection Deployment Models

Before deploying a model on RKNPU, convert the Paddle model to an RKNN model as follows:

* Convert the Paddle dynamic-graph model to an ONNX model by following [PaddleDetection model export](https://github.com/PaddlePaddle/PaddleDetection/blob/release/2.4/deploy/EXPORT_MODEL.md). Set **export.nms=True** during conversion.
* Convert the ONNX model to an RKNN model by following the [conversion guide](../../../../../docs/cn/faq/rknpu2/export.md).

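## Model Conversion Example

All of the following steps are performed on an Ubuntu machine; see the configuration guide to set up the model conversion environment. Using Picodet-s as an example, this section shows how to convert a PaddleDetection model to an RKNN model.

### Export the ONNX Model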

```bash
# Download and extract the Paddle static-graph model
wget https://paddledet.bj.bcebos.com/deploy/Inference/picodet_s_416_coco_lcnet.tar
tar xvf picodet_s_416_coco_lcnet.tar

# Convert the static-graph model to ONNX. Keep the save_file name aligned with the archive name
paddle2onnx --model_dir picodet_s_416_coco_lcnet \
            --model_filename model.pdmodel \
            --params_filename model.pdiparams \
            --save_file picodet_s_416_coco_lcnet/picodet_s_416_coco_lcnet.onnx \
            --enable_dev_version True

# Fix the input shape
python -m paddle2onnx.optimize --input_model picodet_s_416_coco_lcnet/picodet_s_416_coco_lcnet.onnx \
                                --output_model picodet_s_416_coco_lcnet/picodet_s_416_coco_lcnet.onnx \
                                --input_shape_dict "{'image':[1,3,416,416]}"
```

- [Picodet RKNPU2 model conversion guide](./picodet.md)
- [YOLOv8 RKNPU2 model conversion guide](./yolov8.md)

### Write the Model Export Configuration File

Taking an RKNN model for RK3568 as an example, edit tools/rknpu2/config/RK3568/picodet_s_416_coco_lcnet.yaml to convert the ONNX model to an RKNN model.

**Modify the normalize parameter**

If you need to run the normalize operation on the NPU, configure the normalize parameter according to your model, for example:

```yaml
model_path: ./picodet_s_416_coco_lcnet/picodet_s_416_coco_lcnet.onnx
output_folder: ./picodet_s_416_coco_lcnet
target_platform: RK3568
normalize:
  mean: [[0.485,0.456,0.406]]
  std: [[0.229,0.224,0.225]]
outputs: ['tmp_17','p2o.Concat.9']
```

|

||||

|

||||

**修改outputs参数**

|

||||

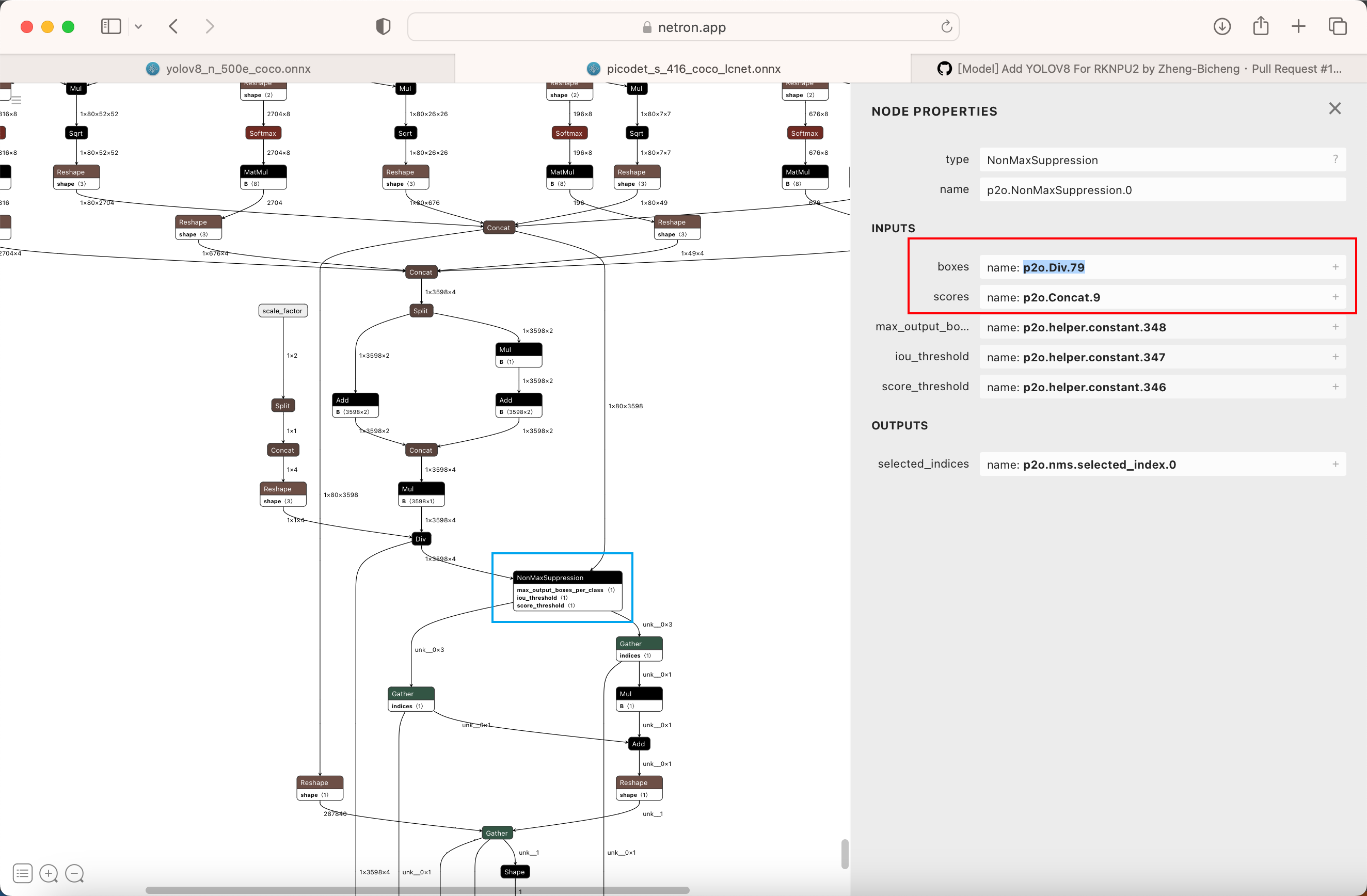

由于Paddle2ONNX版本的不同,转换模型的输出节点名称也有所不同,请使用[Netron](https://netron.app),并找到以下蓝色方框标记的NonMaxSuppression节点,红色方框的节点名称即为目标名称。

|

||||

|

||||

例如,使用Netron可视化后,得到以下图片:

|

||||

|

||||

|

||||

找到蓝色方框标记的NonMaxSuppression节点,可以看到红色方框标记的两个节点名称为tmp_17和p2o.Concat.9,因此需要修改outputs参数,修改后如下:

|

||||

```yaml

|

||||

model_path: ./picodet_s_416_coco_lcnet/picodet_s_416_coco_lcnet.onnx

|

||||

output_folder: ./picodet_s_416_coco_lcnet

|

||||

target_platform: RK3568

|

||||

normalize: None

|

||||

outputs: ['tmp_17','p2o.Concat.9']

|

||||

```

### Convert the Model

```bash
# Convert the ONNX model to an RKNN model
# The converted model is generated in the picodet_s_416_coco_lcnet directory (the output_folder set in the configuration file)
python tools/rknpu2/export.py --config_path tools/rknpu2/config/picodet_s_416_coco_lcnet.yaml \
                              --target_platform rk3588
```

### Modify the Runtime Configuration File

In the configuration file, only **Normalize** and **Permute** under **Preprocess** need to be changed.

**Remove Permute**

RKNPU supports only the NHWC input format, so the Permute operation must be removed. After removal, the Preprocess section of the configuration file looks like this:

```yaml
Preprocess:
- interp: 2
  keep_ratio: false
  target_size:
  - 416
  - 416
  type: Resize
- is_scale: true
  mean:
  - 0.485
  - 0.456
  - 0.406
  std:
  - 0.229
  - 0.224
  - 0.225
  type: NormalizeImage
```
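To make the layout difference concrete, here is a minimal Python sketch of what a Permute step does: it reorders an image from HWC to CHW. This is not FastDeploy code; the function name `hwc_to_chw` and the nested-list image are hypothetical and only illustrate the reordering that is dropped because RKNPU takes NHWC input.

```python
def hwc_to_chw(image):
    """Reorder a nested-list image from [H][W][C] to [C][H][W]."""
    height = len(image)
    width = len(image[0])
    channels = len(image[0][0])
    return [
        [[image[y][x][c] for x in range(width)] for y in range(height)]
        for c in range(channels)
    ]


# A 1x2 image with 3 channels per pixel, stored in HWC layout.
hwc_image = [[[1, 2, 3], [4, 5, 6]]]
chw_image = hwc_to_chw(hwc_image)
print(chw_image)
```

With Permute removed, the preprocessed image keeps its HWC layout and is passed to the NPU without this reordering.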

**Decide whether to remove Normalize based on the model conversion file**

RKNPU can run the Normalize operation on the NPU. If you configured the normalize parameter when exporting the model, remove **Normalize**. After removal, the Preprocess section of the configuration file looks like this:

```yaml
Preprocess:
- interp: 2
  keep_ratio: false
  target_size:
  - 416
  - 416
  type: Resize
```
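The two rules above (always drop Permute, drop Normalize only when it was configured at export time) can be summarized in a short Python sketch. The helper `adapt_preprocess_for_rknpu` and the list-of-dicts representation are hypothetical illustrations, not part of FastDeploy; the op type names are taken from the configuration shown above.

```python
def adapt_preprocess_for_rknpu(ops, normalize_on_npu):
    """Return the preprocessing ops to keep for an RKNN model.

    ops: list of dicts with a "type" key, mirroring the Preprocess list.
    normalize_on_npu: True if normalize was configured when exporting the model.
    """
    kept = []
    for op in ops:
        if op["type"] == "Permute":
            continue  # RKNPU takes NHWC input, so Permute is always dropped
        if op["type"] == "NormalizeImage" and normalize_on_npu:
            continue  # normalization already runs on the NPU
        kept.append(op)
    return kept


ops = [
    {"type": "Resize", "target_size": [416, 416], "keep_ratio": False, "interp": 2},
    {"type": "NormalizeImage", "is_scale": True},
    {"type": "Permute"},
]
print([op["type"] for op in adapt_preprocess_for_rknpu(ops, normalize_on_npu=True)])
```

Passing `normalize_on_npu=False` keeps the NormalizeImage entry, which matches the first of the two configurations above.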

## Other Links

- [C++ deployment](./cpp)
- [Python deployment](./python)
- [Vision model prediction results](../../../../../docs/api/vision_results/)

@@ -1,37 +1,16 @@

CMAKE_MINIMUM_REQUIRED(VERSION 3.10)
project(rknpu2_test)

set(CMAKE_CXX_STANDARD 14)
PROJECT(infer_demo C CXX)
CMAKE_MINIMUM_REQUIRED (VERSION 3.10)

# Path to the downloaded and extracted FastDeploy library
set(FASTDEPLOY_INSTALL_DIR "thirdpartys/fastdeploy-0.0.3")
option(FASTDEPLOY_INSTALL_DIR "Path of downloaded fastdeploy sdk.")

include(${FASTDEPLOY_INSTALL_DIR}/FastDeployConfig.cmake)
include_directories(${FastDeploy_INCLUDE_DIRS})
include(${FASTDEPLOY_INSTALL_DIR}/FastDeploy.cmake)

add_executable(infer_picodet infer_picodet.cc)
target_link_libraries(infer_picodet ${FastDeploy_LIBS})
# Add the FastDeploy dependency headers
include_directories(${FASTDEPLOY_INCS})

add_executable(infer_picodet_demo ${PROJECT_SOURCE_DIR}/infer_picodet_demo.cc)
target_link_libraries(infer_picodet_demo ${FASTDEPLOY_LIBS})

set(CMAKE_INSTALL_PREFIX ${CMAKE_SOURCE_DIR}/build/install)

install(TARGETS infer_picodet DESTINATION ./)

install(DIRECTORY model DESTINATION ./)
install(DIRECTORY images DESTINATION ./)

file(GLOB FASTDEPLOY_LIBS ${FASTDEPLOY_INSTALL_DIR}/lib/*)
message("${FASTDEPLOY_LIBS}")
install(PROGRAMS ${FASTDEPLOY_LIBS} DESTINATION lib)

file(GLOB ONNXRUNTIME_LIBS ${FASTDEPLOY_INSTALL_DIR}/third_libs/install/onnxruntime/lib/*)
install(PROGRAMS ${ONNXRUNTIME_LIBS} DESTINATION lib)

install(DIRECTORY ${FASTDEPLOY_INSTALL_DIR}/third_libs/install/opencv/lib DESTINATION ./)

file(GLOB PADDLETOONNX_LIBS ${FASTDEPLOY_INSTALL_DIR}/third_libs/install/paddle2onnx/lib/*)
install(PROGRAMS ${PADDLETOONNX_LIBS} DESTINATION lib)

file(GLOB RKNPU2_LIBS ${FASTDEPLOY_INSTALL_DIR}/third_libs/install/rknpu2_runtime/${RKNN2_TARGET_SOC}/lib/*)
install(PROGRAMS ${RKNPU2_LIBS} DESTINATION lib)
add_executable(infer_yolov8_demo ${PROJECT_SOURCE_DIR}/infer_yolov8_demo.cc)
target_link_libraries(infer_yolov8_demo ${FASTDEPLOY_LIBS})

@@ -1,4 +1,5 @@

[English](README.md) | 简体中文

# PaddleDetection C++ Deployment Example

This directory provides `infer_picodet.cc`, an example that quickly deploys PPDetection models on Rockchip boards with acceleration from the second-generation NPU.

@@ -10,50 +11,24 @@

For the steps above, see [Building the RK second-generation NPU deployment library](../../../../../../docs/cn/build_and_install/rknpu2.md).

## Generate the Basic Directory Layout

This example consists of the following parts:

```text
.
├── CMakeLists.txt
├── build  # build directory
├── images  # directory for images
├── infer_picodet.cc
├── model  # directory for model files
└── thirdpartys  # directory for the SDK
```

First, create the directory structure.

The following uses picodet as the example for inference deployment.

```bash
mkdir build
mkdir images
mkdir model
mkdir thirdpartys
```

## Build

### Build the SDK and Copy It to the thirdpartys Directory

Build the SDK by following [Building the RK second-generation NPU deployment library](../../../../../../docs/cn/build_and_install/rknpu2.md). When the build finishes, a fastdeploy-0.0.3 directory is generated under the build directory; move it into the thirdpartys directory.

### Copy the Model and Configuration Files to the model Directory

Converting the Paddle dynamic-graph model -> Paddle static-graph model -> ONNX model produces an ONNX file and a matching yaml configuration file. Place the configuration file in the model directory.
The model file converted to RKNN must also be copied to model.

### Place the Test Image in the images Directory

```bash
wget https://gitee.com/paddlepaddle/PaddleDetection/raw/release/2.4/demo/000000014439.jpg
cp 000000014439.jpg ./images
```

### Build the Example

```bash
cd build
cmake ..
make -j8
make install
# Download the prebuilt library; see the Documentation Navigation section for details
wget https://bj.bcebos.com/fastdeploy/release/cpp/fastdeploy-linux-x64-x.x.x.tgz
tar xvf fastdeploy-linux-x64-x.x.x.tgz
cmake .. -DFASTDEPLOY_INSTALL_DIR=${PWD}/fastdeploy-linux-x64-x.x.x
make -j

# Download the test image
wget https://gitee.com/paddlepaddle/PaddleDetection/raw/release/2.4/demo/000000014439.jpg

# CPU inference
./infer_picodet_demo ./picodet_s_416_coco_lcnet 000000014439.jpg 0
# RKNPU2 inference
./infer_picodet_demo ./picodet_s_416_coco_lcnet 000000014439.jpg 1
```
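The third command-line argument selects the backend: 0 runs the ONNX model on the CPU and 1 runs the RKNN model on RKNPU2. A minimal Python sketch of that mapping follows; the helper `select_backend` and its return values are hypothetical. It is not the demo's implementation: it raises on unknown values, whereas the C++ demo simply returns without running inference.

```python
def select_backend(run_option):
    """Map the demo's third argument to a (device, model format) pair."""
    backends = {0: ("cpu", "onnx"), 1: ("rknpu2", "rknn")}
    option = int(run_option)
    if option not in backends:
        raise ValueError(f"run_option must be 0 or 1, got {run_option!r}")
    return backends[option]


print(select_backend("0"))
print(select_backend("1"))
```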

## Run the Example

@@ -63,7 +38,9 @@ cd ./build/install

|

||||

./infer_picodet model/picodet_s_416_coco_lcnet images/000000014439.jpg

|

||||

```

## Documentation Navigation

- [Model introduction](../../)
- [Python deployment](../python)
- [Vision model prediction results](../../../../../../docs/api/vision_results/)
- [RKNPU2 prebuilt libraries](../../../../../../docs/cn/faq/rknpu2/rknpu2.md)

@@ -13,8 +13,8 @@

|

||||

// limitations under the License.

|

||||

#include <iostream>

|

||||

#include <string>

|

||||

|

||||

#include "fastdeploy/vision.h"

|

||||

#include <sys/time.h>

|

||||

|

||||

void ONNXInfer(const std::string& model_dir, const std::string& image_file) {

|

||||

std::string model_file = model_dir + "/picodet_s_416_coco_lcnet.onnx";

|

||||

@@ -25,7 +25,7 @@ void ONNXInfer(const std::string& model_dir, const std::string& image_file) {

|

||||

auto format = fastdeploy::ModelFormat::ONNX;

|

||||

|

||||

auto model = fastdeploy::vision::detection::PicoDet(

|

||||

model_file, params_file, config_file,option,format);

|

||||

model_file, params_file, config_file, option, format);

|

||||

|

||||

fastdeploy::TimeCounter tc;

|

||||

tc.Start();

|

||||

@@ -35,14 +35,12 @@ void ONNXInfer(const std::string& model_dir, const std::string& image_file) {

|

||||

std::cerr << "Failed to predict." << std::endl;

|

||||

return;

|

||||

}

|

||||

auto vis_im = fastdeploy::vision::VisDetection(im, res,0.5);

|

||||

auto vis_im = fastdeploy::vision::VisDetection(im, res, 0.5);

|

||||

tc.End();

|

||||

tc.PrintInfo("PPDet in ONNX");

|

||||

|

||||

cv::imwrite("infer_onnx.jpg", vis_im);

|

||||

std::cout

|

||||

<< "Visualized result saved in ./infer_onnx.jpg"

|

||||

<< std::endl;

|

||||

std::cout << "Visualized result saved in ./infer_onnx.jpg" << std::endl;

|

||||

}

|

||||

|

||||

void RKNPU2Infer(const std::string& model_dir, const std::string& image_file) {

|

||||

@@ -56,8 +54,10 @@ void RKNPU2Infer(const std::string& model_dir, const std::string& image_file) {

|

||||

auto format = fastdeploy::ModelFormat::RKNN;

|

||||

|

||||

auto model = fastdeploy::vision::detection::PicoDet(

|

||||

model_file, params_file, config_file,option,format);

|

||||

model_file, params_file, config_file, option, format);

|

||||

|

||||

model.GetPreprocessor().DisablePermute();

|

||||

model.GetPreprocessor().DisableNormalize();

|

||||

model.GetPostprocessor().ApplyDecodeAndNMS();

|

||||

|

||||

auto im = cv::imread(image_file);

|

||||

@@ -73,21 +73,24 @@ void RKNPU2Infer(const std::string& model_dir, const std::string& image_file) {

|

||||

tc.PrintInfo("PPDet in RKNPU2");

|

||||

|

||||

std::cout << res.Str() << std::endl;

|

||||

auto vis_im = fastdeploy::vision::VisDetection(im, res,0.5);

|

||||

auto vis_im = fastdeploy::vision::VisDetection(im, res, 0.5);

|

||||

cv::imwrite("infer_rknpu2.jpg", vis_im);

|

||||

std::cout << "Visualized result saved in ./infer_rknpu2.jpg" << std::endl;

|

||||

}

|

||||

|

||||

int main(int argc, char* argv[]) {

|

||||

if (argc < 3) {

|

||||

if (argc < 4) {

|

||||

std::cout

|

||||

<< "Usage: infer_demo path/to/model_dir path/to/image run_option, "

|

||||

"e.g ./infer_picodet_demo ./picodet_model_dir ./test.jpeg 0"

|

||||

<< std::endl;

|

||||

return -1;

|

||||

}

|

||||

RKNPU2Infer(argv[1], argv[2]);

|

||||

//ONNXInfer(argv[1], argv[2]);

|

||||

|

||||

if (std::atoi(argv[3]) == 0) {

|

||||

ONNXInfer(argv[1], argv[2]);

|

||||

} else if (std::atoi(argv[3]) == 1) {

|

||||

RKNPU2Infer(argv[1], argv[2]);

|

||||

}

|

||||

return 0;

|

||||

}

|

||||

|

||||

|

||||

@@ -0,0 +1,95 @@

// Copyright (c) 2022 PaddlePaddle Authors. All Rights Reserved.
//
// Licensed under the Apache License, Version 2.0 (the "License");
// you may not use this file except in compliance with the License.
// You may obtain a copy of the License at
//
//     http://www.apache.org/licenses/LICENSE-2.0
//
// Unless required by applicable law or agreed to in writing, software
// distributed under the License is distributed on an "AS IS" BASIS,
// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
// See the License for the specific language governing permissions and
// limitations under the License.

#include "fastdeploy/vision.h"

void ONNXInfer(const std::string& model_dir, const std::string& image_file) {
  std::string model_file = model_dir + "/yolov8_n_500e_coco.onnx";
  std::string params_file;
  std::string config_file = model_dir + "/infer_cfg.yml";
  auto option = fastdeploy::RuntimeOption();
  option.UseCpu();
  auto format = fastdeploy::ModelFormat::ONNX;

  auto model = fastdeploy::vision::detection::PaddleYOLOv8(
      model_file, params_file, config_file, option, format);

  fastdeploy::TimeCounter tc;
  tc.Start();
  auto im = cv::imread(image_file);
  fastdeploy::vision::DetectionResult res;
  if (!model.Predict(im, &res)) {
    std::cerr << "Failed to predict." << std::endl;
    return;
  }
  auto vis_im = fastdeploy::vision::VisDetection(im, res, 0.5);
  tc.End();
  tc.PrintInfo("PPDet in ONNX");

  std::cout << res.Str() << std::endl;
  cv::imwrite("infer_onnx.jpg", vis_im);
  std::cout << "Visualized result saved in ./infer_onnx.jpg" << std::endl;
}

void RKNPU2Infer(const std::string& model_dir, const std::string& image_file) {
  auto model_file = model_dir + "/yolov8_n_500e_coco_rk3588_unquantized.rknn";
  auto params_file = "";
  auto config_file = model_dir + "/infer_cfg.yml";

  auto option = fastdeploy::RuntimeOption();
  option.UseRKNPU2();

  auto format = fastdeploy::ModelFormat::RKNN;

  auto model = fastdeploy::vision::detection::PaddleYOLOv8(
      model_file, params_file, config_file, option, format);

  model.GetPreprocessor().DisablePermute();
  model.GetPreprocessor().DisableNormalize();
  model.GetPostprocessor().ApplyDecodeAndNMS();

  auto im = cv::imread(image_file);

  fastdeploy::vision::DetectionResult res;
  fastdeploy::TimeCounter tc;
  tc.Start();
  if (!model.Predict(&im, &res)) {
    std::cerr << "Failed to predict." << std::endl;
    return;
  }
  tc.End();
  tc.PrintInfo("PPDet in RKNPU2");

  std::cout << res.Str() << std::endl;
  auto vis_im = fastdeploy::vision::VisDetection(im, res, 0.5);
  cv::imwrite("infer_rknpu2.jpg", vis_im);
  std::cout << "Visualized result saved in ./infer_rknpu2.jpg" << std::endl;
}

int main(int argc, char* argv[]) {
  if (argc < 4) {
    std::cout
        << "Usage: infer_demo path/to/model_dir path/to/image run_option, "
           "e.g ./infer_yolov8_demo ./yolov8_model_dir ./test.jpeg 0"
        << std::endl;
    return -1;
  }

  if (std::atoi(argv[3]) == 0) {
    ONNXInfer(argv[1], argv[2]);
  } else if (std::atoi(argv[3]) == 1) {
    RKNPU2Infer(argv[1], argv[2]);
  }
  return 0;
}

@@ -0,0 +1,68 @@

# Picodet RKNPU2 Model Conversion Guide

All of the following steps are performed on an Ubuntu machine; see the configuration guide to set up the model conversion environment. Using Picodet-s as an example, this guide shows how to convert a PaddleDetection model to an RKNN model.

### Export the ONNX Model

```bash
# Download and extract the Paddle static-graph model
wget https://paddledet.bj.bcebos.com/deploy/Inference/picodet_s_416_coco_lcnet.tar
tar xvf picodet_s_416_coco_lcnet.tar

# Convert the static-graph model to ONNX. Keep the save_file name aligned with the archive name
paddle2onnx --model_dir picodet_s_416_coco_lcnet \
            --model_filename model.pdmodel \
            --params_filename model.pdiparams \
            --save_file picodet_s_416_coco_lcnet/picodet_s_416_coco_lcnet.onnx \
            --enable_dev_version True

# Fix the input shape
python -m paddle2onnx.optimize --input_model picodet_s_416_coco_lcnet/picodet_s_416_coco_lcnet.onnx \
                                --output_model picodet_s_416_coco_lcnet/picodet_s_416_coco_lcnet.onnx \
                                --input_shape_dict "{'image':[1,3,416,416]}"
```
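When the same export is repeated for several models, the two commands above can be assembled programmatically. The sketch below only builds the argument lists from the flags shown in this guide; the helper names `paddle2onnx_command` and `fix_shape_command` are hypothetical, and it assumes the archive extracts to a directory with the same name as the model.

```python
import shlex


def paddle2onnx_command(model_name):
    """Build the paddle2onnx export command for an extracted model directory."""
    return [
        "paddle2onnx",
        "--model_dir", model_name,
        "--model_filename", "model.pdmodel",
        "--params_filename", "model.pdiparams",
        "--save_file", f"{model_name}/{model_name}.onnx",
        "--enable_dev_version", "True",
    ]


def fix_shape_command(model_name, input_shapes):
    """Build the paddle2onnx.optimize command that pins the input shapes in place."""
    onnx_path = f"{model_name}/{model_name}.onnx"
    entries = ",".join(
        f"'{name}':{list(shape)}".replace(" ", "")
        for name, shape in input_shapes.items()
    )
    return [
        "python", "-m", "paddle2onnx.optimize",
        "--input_model", onnx_path,
        "--output_model", onnx_path,
        "--input_shape_dict", "{" + entries + "}",
    ]


export_cmd = paddle2onnx_command("picodet_s_416_coco_lcnet")
shape_cmd = fix_shape_command("picodet_s_416_coco_lcnet", {"image": [1, 3, 416, 416]})
print(shlex.join(export_cmd))
print(shlex.join(shape_cmd))
```

Each list can be passed to `subprocess.run` unchanged, which avoids shell quoting problems with the shape dictionary.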

### Write the Model Export Configuration File

Taking an RKNN model for RK3568 as an example, edit tools/rknpu2/config/picodet_s_416_coco_lcnet_unquantized.yaml to convert the ONNX model to an RKNN model.

**Modify the normalize parameter**

If you need to run the normalize operation on the NPU, configure the normalize parameter according to your model, for example:

```yaml
mean:
  -
    - 127.5
    - 127.5
    - 127.5
std:
  -
    - 127.5
    - 127.5
    - 127.5
```
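The arithmetic behind these values can be checked with a short Python sketch. It assumes that the export tool applies `(pixel - mean) / std` to raw 0-255 pixel values; this guide does not state that explicitly, so treat it as an assumption. The helper names are hypothetical.

```python
def normalize(pixel, mean, std):
    """Apply the assumed per-channel normalization to one raw pixel value."""
    return (pixel - mean) / std


def to_pixel_scale(values):
    """Convert mean/std values given on a 0-1 scale to the 0-255 pixel scale."""
    return [value * 255.0 for value in values]


# With mean = std = 127.5, raw pixels in [0, 255] map to [-1, 1].
print(normalize(0, 127.5, 127.5), normalize(255, 127.5, 127.5))
# A 0-1 scale value of 0.5 corresponds to 127.5 on the pixel scale.
print(to_pixel_scale([0.5, 0.5, 0.5]))
```

Under the same assumption, mean and std values taken from a runtime configuration that uses `is_scale: true` would need to be multiplied by 255 before being written to the export configuration.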

**Modify the outputs parameter**

Output node names differ across Paddle2ONNX versions. Visualize the model with [Netron](https://netron.app) and locate the NonMaxSuppression node marked with the blue box below; the node names in the red box are the target names.

For example, visualizing the model with Netron gives the following image:

Locate the NonMaxSuppression node marked with the blue box. The two node names marked with the red box are p2o.Div.79 and p2o.Concat.9, so the outputs parameter must be changed as follows:

```yaml
outputs_nodes: [ 'p2o.Div.79','p2o.Concat.9' ]
```

### Convert the Model

```bash
# Convert the ONNX model to an RKNN model
# The converted model is generated in the picodet_s_416_coco_lcnet directory (the output_folder set in the configuration file)
python tools/rknpu2/export.py --config_path tools/rknpu2/config/picodet_s_416_coco_lcnet_unquantized.yaml \
                              --target_platform rk3588
```

@@ -0,0 +1,50 @@

# YOLOv8 RKNPU2 Model Conversion Guide

All of the following steps are performed on an Ubuntu machine; see the configuration guide to set up the model conversion environment. Using yolov8 as an example, this guide shows how to convert a PaddleDetection model to an RKNN model.

### Export the ONNX Model

```bash
# Download and extract the Paddle static-graph model

# Convert the static-graph model to ONNX. Keep the save_file name aligned with the archive name
paddle2onnx --model_dir yolov8_n_500e_coco \
            --model_filename model.pdmodel \
            --params_filename model.pdiparams \
            --save_file yolov8_n_500e_coco/yolov8_n_500e_coco.onnx \
            --enable_dev_version True

# Fix the input shape
python -m paddle2onnx.optimize --input_model yolov8_n_500e_coco/yolov8_n_500e_coco.onnx \
                                --output_model yolov8_n_500e_coco/yolov8_n_500e_coco.onnx \
                                --input_shape_dict "{'image':[1,3,640,640],'scale_factor':[1,2]}"
```

### Write the Model Export Configuration File

**Modify the outputs parameter**

Output node names differ across Paddle2ONNX versions. Visualize the model with [Netron](https://netron.app) and locate the NonMaxSuppression node marked with the blue box below; the node names in the red box are the target names.

For example, visualizing the model with Netron gives the following image:

Locate the NonMaxSuppression node marked with the blue box. The two node names marked with the red box are p2o.Div.1 and p2o.Concat.49, so the outputs parameter must be changed as follows:

```yaml
outputs_nodes: [ 'p2o.Div.1','p2o.Concat.49' ]
```
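The same names can also be read from the graph in code instead of from the Netron view. The Python sketch below assumes that the names in the red box are the first two inputs (boxes and scores) of the NonMaxSuppression node, which this guide implies but does not state. `nms_input_names` and `load_nodes` are hypothetical helpers; `load_nodes` relies on the third-party `onnx` package, and the demo uses stand-in node objects so that it runs without a model file.

```python
from types import SimpleNamespace


def nms_input_names(nodes):
    """Return the first two input names (boxes, scores) of each NonMaxSuppression node."""
    names = []
    for node in nodes:
        if node.op_type == "NonMaxSuppression":
            names.append(list(node.input[:2]))
    return names


def load_nodes(onnx_path):
    """Load graph nodes from an ONNX file (requires the third-party onnx package)."""
    import onnx  # imported lazily so the demo below runs without it

    return onnx.load(onnx_path).graph.node


# Stand-in nodes that mimic the op_type and input fields of ONNX graph nodes.
demo_nodes = [
    SimpleNamespace(op_type="Concat", input=["a", "b"]),
    SimpleNamespace(
        op_type="NonMaxSuppression",
        input=["p2o.Div.1", "p2o.Concat.49", "max_boxes", "iou_thr", "score_thr"],
    ),
]
print(nms_input_names(demo_nodes))
```

For a real model, `nms_input_names(load_nodes("yolov8_n_500e_coco/yolov8_n_500e_coco.onnx"))` would list the candidate names to compare against the Netron view.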

### Convert the Model

```bash
# Convert the ONNX model to an RKNN model
# Convert the unquantized model; it is generated in the yolov8_n_500e_coco directory (the output_folder set in the configuration file)
python tools/rknpu2/export.py --config_path tools/rknpu2/config/yolov8_n_unquantized.yaml \
                              --target_platform rk3588

# Convert the quantized model; it is generated in the yolov8_n_500e_coco directory (the output_folder set in the configuration file)
python tools/rknpu2/export.py --config_path tools/rknpu2/config/yolov8_n_quantized.yaml \
                              --target_platform rk3588
```
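The quantized configuration in this commit points its `dataset` field at `./yolov8_n_500e_coco/dataset.txt`. A minimal Python sketch for generating such a file follows. It assumes the file is a plain list of calibration image paths, one per line, which should be verified against the RKNN toolkit documentation; the helper `write_dataset_list` is hypothetical.

```python
import tempfile
from pathlib import Path


def write_dataset_list(image_dir, dataset_file, suffixes=(".jpg", ".jpeg", ".png")):
    """Write one image path per line and return the number of images listed."""
    images = sorted(
        path for path in Path(image_dir).iterdir() if path.suffix.lower() in suffixes
    )
    Path(dataset_file).write_text("".join(f"{path}\n" for path in images))
    return len(images)


# Demo on a throwaway directory holding two placeholder images and one non-image file.
with tempfile.TemporaryDirectory() as tmp:
    for name in ("a.jpg", "b.png", "notes.txt"):
        (Path(tmp) / name).write_bytes(b"")
    count = write_dataset_list(tmp, Path(tmp) / "dataset.txt")
    lines = (Path(tmp) / "dataset.txt").read_text().splitlines()
print(count)
```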

|

||||

Executable → Regular

+1

@@ -258,6 +258,7 @@ class FASTDEPLOY_DECL PaddleYOLOv8 : public PPDetBase {

|

||||

Backend::LITE};

|

||||

valid_gpu_backends = {Backend::ORT, Backend::PDINFER, Backend::TRT};

|

||||

valid_kunlunxin_backends = {Backend::LITE};

|

||||

valid_rknpu_backends = {Backend::RKNPU2};

|

||||

valid_ascend_backends = {Backend::LITE};

|

||||

initialized = Initialize();

|

||||

}

|

||||

|

||||

@@ -90,11 +90,9 @@ bool PaddleDetPreprocessor::BuildPreprocessPipelineFromConfig() {

|

||||

// Do nothing, do permute as the last operation

|

||||

has_permute = true;

|

||||

continue;

|

||||

// processors_.push_back(std::make_shared<HWC2CHW>());

|

||||

} else if (op_name == "Pad") {

|

||||

auto size = op["size"].as<std::vector<int>>();

|

||||

auto value = op["fill_value"].as<std::vector<float>>();

|

||||

processors_.push_back(std::make_shared<Cast>("float"));

|

||||

processors_.push_back(

|

||||

std::make_shared<PadToSize>(size[1], size[0], value));

|

||||

} else if (op_name == "PadStride") {

|

||||

|

||||

+3

-1

@@ -10,6 +10,8 @@ std:

    - 127.5
model_path: ./picodet_s_416_coco_lcnet/picodet_s_416_coco_lcnet.onnx
outputs_nodes:
  - 'p2o.Div.79'
  - 'p2o.Concat.9'
do_quantization: False
dataset:
output_folder: "./Portrait_PP_HumanSegV2_Lite_256x144_infer"
output_folder: "./picodet_s_416_coco_lcnet"

@@ -0,0 +1,9 @@

mean:
std:
model_path: ./yolov8_n_500e_coco/yolov8_n_500e_coco.onnx
outputs_nodes:
  - 'p2o.Mul.119'
  - 'p2o.Concat.49'
do_quantization: True
dataset: "./yolov8_n_500e_coco/dataset.txt"
output_folder: "./yolov8_n_500e_coco"

@@ -0,0 +1,9 @@

mean:
std:
model_path: ./yolov8_n_500e_coco/yolov8_n_500e_coco.onnx
outputs_nodes:
  - 'p2o.Div.1'
  - 'p2o.Concat.49'
do_quantization: False
dataset: "./dataset.txt"
output_folder: "./yolov8_n_500e_coco"
